I’ve been working with SEO for years, and if there’s one question I hear more than any other, it’s this: “What exactly are SEO ranking factors?”

The simple answer is that SEO ranking factors are the criteria search engines like Google use to decide which pages appear at the top of the search results and which ones get buried on page 10 where nobody ever looks.

Think of it like this. When you search for something on Google, the search engine has to sort through billions of web pages in milliseconds. It needs a system to figure out which pages deserve the top spots. That system is built on ranking factors.

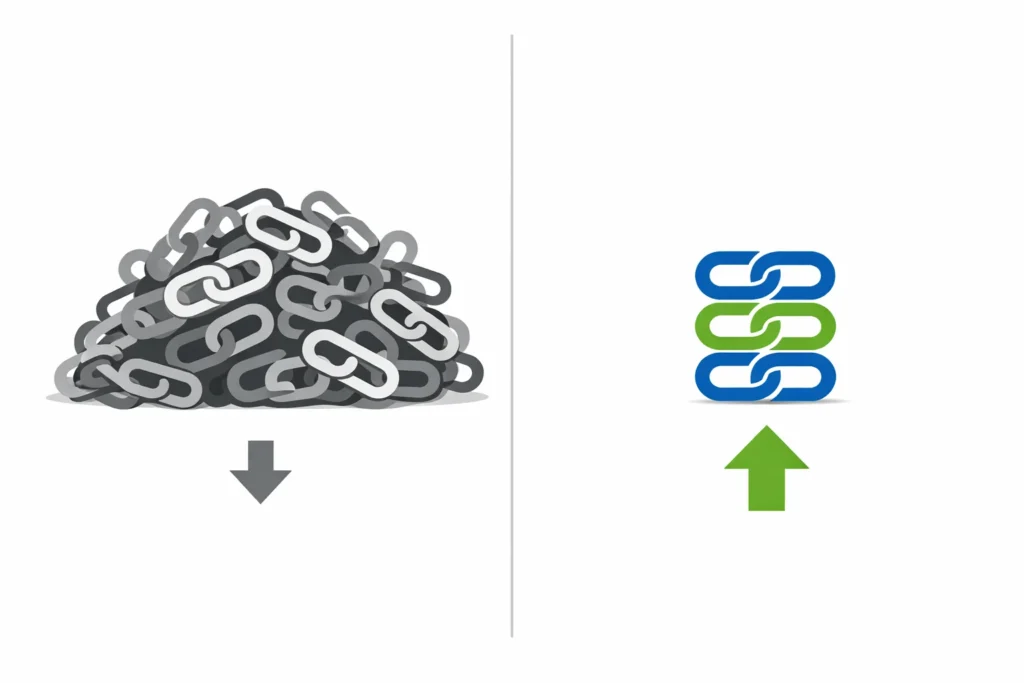

Here’s where things get messy. Google has publicly stated that it uses over 200 ranking signals in its algorithm. That sounds impressive, but it’s also overwhelming and honestly a bit misleading.

Most search engine optimization guides you’ll find online try to list all 200 factors. I used to think that was helpful too. But after years of actually testing what works and watching real websites climb the rankings, I realized something important.

Most ranking factor lists are practically useless. They don’t tell you which SEO ranking factors matter most or which ones you should focus on first.

The Problem with Traditional Ranking Factor Lists

I’ve read dozens of these comprehensive lists over the years. They all follow the same pattern. They tell you that everything from your domain age to your alt text to your social media shares might affect your rankings.

Technically they’re not wrong. But here’s what they miss.

Not all ranking factors carry the same weight. Some factors are absolutely critical and will make or break your rankings. Others are so minor that you could spend months optimizing them and see zero improvement.

Let me give you a real example from my own experience. I once spent three weeks obsessing over my page speed, trying to shave off every millisecond. I got my site loading blazingly fast. My rankings barely moved.

Then I rewrote one article to actually answer the search intent better, adding information that none of my competitors included. That single piece of content jumped from page 3 to position 4 within two months. My SERP position improved dramatically.

The lesson? Page speed matters, but content quality and unique valuable information matter way more.

What Search Engines Actually Look For

Google evaluates your page by asking three fundamental questions.

First, can I find and understand this page? This is the technical foundation. If Google’s crawling and indexing systems can’t access your content or if your site architecture is a mess, nothing else matters. You’re out of the game before it even starts.

Second, does this page actually answer what the searcher wants to know? This is about search intent, content quality, and content relevance. Google has gotten incredibly good at understanding whether your content truly helps people or just tries to game the system with keywords.

Third, can I trust that this page is accurate and authoritative? This is where factors like backlinks, brand signals, and expertise come into play. Google wants to send people to sources they can rely on.

Every other ranking factor connects back to one of these three fundamentals.

The Truth About AI Search and Traditional Rankings

Here’s something that confused me for months when AI Overviews started appearing in search results. I kept wondering if traditional SEO still mattered or if everything had changed.

I dug into the data and analyzed which sites were appearing in those AI generated answer boxes. What I found surprised me.

Every single site showing up in AI Overviews was also ranking in the traditional top 10 results. Not most of them. All of them.

Traditional SEO isn’t dying. It’s actually the foundation that makes AI visibility possible. The sites that rank well in classic search results are the ones AI platforms pull information from.

This means you don’t have to choose between optimizing for traditional search or AI search. Getting the fundamentals right serves both.

Why Correlation Gets Confused with Causation

One reason ranking factor lists mislead people is because they mix up correlation with causation.

For example, you might read that longer content ranks better. Studies show that pages in the top 3 positions average 2,000 words or more. So does that mean you should always write 2,000 word articles?

Not necessarily. Those pages don’t rank because they’re long. They rank because they’re comprehensive and answer the searcher’s question thoroughly. Sometimes that takes 2,000 words. Sometimes it takes 800.

I’ve seen 1,200-word articles outrank 5,000-word competitors because the shorter piece was more focused and helpful. Length was correlated with higher rankings in the study, but it wasn’t causing them.

The same goes for things like social shares, domain authority scores, and dozens of other factors that get treated as direct ranking signals when they’re often just side effects of quality content and good marketing.

What This Article Does Differently

I’m not going to list 200 ranking factors and leave you to figure out which ones matter.

Instead I’m going to show you exactly which factors you should prioritize based on real testing and real results. I’ll explain which ones are foundation level must haves, which ones drive actual ranking improvements, and which ones only matter for highly competitive situations.

I’ll also tell you which popular SEO tactics are basically wastes of time in 2026. Because knowing what not to focus on is just as valuable as knowing what works.

Most importantly, I’ll give you the frameworks and mental models that help you think about SEO correctly. Understanding why something works is more valuable than just knowing that it works.

I’m not trying to turn you into an SEO expert who knows every technical detail. What I want is to help you focus your efforts on the 20 percent of ranking factors that drive 80 percent of results.

Because at the end of the day, SEO isn’t about checking boxes on a massive list. It’s about creating something genuinely valuable and making sure search engines can find it, understand it, and trust it enough to show it to the people who need it in organic search.

That’s what gets results. The rest is distraction.

How Google’s Ranking Signals Changed in 2026

When I first started learning SEO back in the day, things were different. You could rank a page just by cramming it full of keywords and getting a bunch of directory links. Those tactics stopped working years ago.

But 2026 has brought some changes that even experienced SEO professionals are still adjusting to.

The biggest shift is how Google handles AI generated content and how AI Overviews have changed the search landscape. I spent months worried that everything I knew about SEO was about to become obsolete.

Turns out I was worried about the wrong thing.

What Stayed the Same (The Evergreen Factors)

Some ranking factors have remained critical since the beginning of search engines. Content quality still matters more than anything else. Quality backlinks from reputable sources still signal trust and authority. Technical foundations like proper site architecture and mobile optimization are still non negotiable.

I tested this recently with a client’s website. We focused entirely on the basics: making sure Google’s crawling systems could access the site properly, creating genuinely helpful content, and building relationships that led to natural backlinks.

No fancy tricks. No trying to game the latest algorithm update. Just solid fundamentals.

The site went from barely any organic search traffic to over 5,000 monthly visits in six months. The evergreen factors still work because they’re based on what users actually need, not on exploiting loopholes.

What Changed (The Modern Adaptations)

But here’s what has changed in the past few years.

The concept of information gain has become critical with Google’s Helpful Content Update. This is something I didn’t even think about a few years ago. Basically Google now evaluates whether your content adds something new to the conversation or just repeats what’s already out there.

I learned this the hard way when I published what I thought was a comprehensive guide on a topic. It covered everything. But it didn’t rank.

Why? Because I had essentially created a well written summary of the top 10 results. I added zero new information, no unique perspective, no original data. Google had no reason to rank me when I was just echoing everyone else.

Another shift is how Google evaluates links. Domain authority scores used to be the gold standard for link quality in link building strategies. But I’ve noticed Google getting much better at discounting irrelevant high authority links.

I’ve seen pages with links from DR 30 sites in the same niche outrank pages with DR 80 links from general news sites. Relevance has become more important than raw authority.

Brand signals have also expanded beyond traditional SEO. Google now looks at your presence across multiple platforms. Are you mentioned on social media? Do you have reviews on sites beyond just Google Business Profile? Are people talking about your brand on forums and industry sites?

I noticed this when working with a local business. We built up their presence on Yelp, Angie’s List, and even TikTok. Their Google rankings improved even though we hadn’t built a single traditional backlink during that period.

The algorithm is considering the bigger picture of your brand’s legitimacy and recognition.

Finally, freshness has become query dependent. I used to update all my old content regularly thinking it would help rankings across the board. Sometimes it did. Often it didn’t.

I realized that for queries like “best laptops 2026” or “current SEO trends,” freshness matters tremendously. For queries like “how to change a tire” or “what is photosynthesis,” the date doesn’t matter at all.

Google is smart enough to know which topics require current information and which ones don’t. You need to optimize accordingly rather than treating freshness as a universal factor.

What Are the Most Important SEO Ranking Factors? (The 12 Priority Framework)

After testing hundreds of websites and seeing what actually moves rankings, I’ve organized ranking factors into three tiers. This framework has saved me countless hours of wasted effort.

Foundation Factors (You Can’t Rank Without These)

These are the absolute baseline. If you don’t have these sorted out, nothing else you do will matter. It’s like trying to paint a house with a crumbling foundation.

Proper crawling and indexing sit at the very bottom of the pyramid. I once worked with a client who was frustrated that their new blog posts weren’t ranking. After digging in, I found their robots.txt file was blocking Google from crawling their entire blog section.

No amount of quality content or backlinks would have helped. Google literally couldn’t see their pages.

Make sure your important pages are accessible to search engines. Submit an XML sitemap. Check your robots.txt file. Use Google Search Console to monitor for crawl errors. This is SEO 101 but you’d be surprised how many sites get it wrong.
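As a rough sketch of the robots.txt check, Python's standard `urllib.robotparser` can test a list of important URLs against a set of rules. The rules and the `example.com` URLs below are hypothetical, and Python's parser does not match Google's own robots.txt handling in every detail, so treat this as a first sanity check rather than a verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that accidentally blocks the whole blog section.
ROBOTS_TXT = """\
User-agent: *
Disallow: /blog/
"""

def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that these robots.txt rules forbid for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]

# Hypothetical pages that should be crawlable.
important_pages = [
    "https://example.com/",
    "https://example.com/blog/seo-ranking-factors",
    "https://example.com/pricing",
]

for url in blocked_urls(ROBOTS_TXT, important_pages):
    print("Blocked for Googlebot:", url)
```

Any URL this prints is worth comparing against what Google Search Console reports for the same page.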

Mobile friendliness is another foundation factor. Google uses mobile first indexing now, which means it evaluates the mobile version of your site first, even for desktop rankings.

I tested a site that looked great on desktop but was nearly unusable on mobile. Small text, buttons too close together, content that required horizontal scrolling. It didn’t matter that the desktop version was perfect. The rankings suffered because the mobile experience was terrible.

HTTPS security rounds out the foundation. Having an SSL certificate and serving your site over HTTPS is a confirmed ranking signal. But more importantly, browsers now actively warn users about non HTTPS sites.

I’ve seen bounce rate metrics spike on sites that didn’t have HTTPS simply because visitors got scared off by security warnings before they even saw the content. If you’re running a WordPress site, implementing HTTPS is just one component of a broader security strategy. Following WordPress security best practices helps keep your entire site foundation solid.

Basic content quality is the final foundation element. Your content needs to actually address the topic and provide value. It doesn’t need to be the absolute best content on the internet yet. But it needs to be genuinely helpful and not just keyword stuffed nonsense.

If you don’t have these four factors handled, stop reading and fix them first. Everything else I’m about to tell you won’t help until these basics are solid.

Growth Factors (These Drive Ranking Improvements)

Once your foundation is solid, these factors will push you up the rankings. Focus most of your optimization work here.

Search intent alignment is the most powerful growth factor I’ve encountered. It’s not enough to have content about the right topic. Your content needs to match what searchers actually want when they type in that query.

I use what I call the 3 Cs framework to figure this out. I look at the top ranking pages and analyze their content type, content format, and content angle.

For content type, are the top results blog posts, product pages, category pages, or something else? If you’re trying to rank a product page but all the top results are how to guides, you’re fighting an uphill battle.

For content format, are they listicles, step by step tutorials, comparison articles, or opinion pieces? Match the format that Google is already rewarding.

For content angle, what’s the unique selling point? Are they focused on “cheap,” “fast,” “easy,” or “comprehensive”? Your angle should align with what’s already working.

I tested this framework on a page that was stuck on page 2. I noticed all the top results were comparison articles while mine was a single product review. I restructured it as a comparison and it jumped to position 5 within three weeks.

Information gain and content depth is about adding unique value. This goes beyond just writing longer articles or focusing solely on keyword optimization. It’s about including information that readers can’t get anywhere else.

Original research works great for this. I published an article that included a survey I conducted with 200 people in my industry. That data didn’t exist anywhere else. The article attracted backlinks naturally and ranked quickly.

First hand testing and real examples also create information gain. Instead of just explaining how something works in theory, I show results from actually doing it.

Quality backlinks remain one of the strongest ranking factors. Building a strong backlink profile has become more nuanced than just chasing domain authority.

I now evaluate potential backlinks using three criteria. First, the authority of the linking site. Second, whether that site is relevant to my niche. Third, whether the specific page linking to me actually gets organic traffic.

That third one is crucial. I’ve turned down link opportunities from high authority sites when the linking page itself had zero traffic and ranked for nothing. If Google doesn’t value that page enough to send it traffic, why would a link from it help me?

On the flip side, I’ve pursued links from smaller sites when the linking page was highly relevant and actively ranking for keywords in my niche.
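Here is one way those three checks could be written down as a simple filter. The `LinkProspect` fields, the thresholds, and the sample domains are all illustrative assumptions, not values Google publishes or figures from my testing.

```python
from dataclasses import dataclass

@dataclass
class LinkProspect:
    domain: str
    domain_rating: int         # third-party authority score, 0 to 100
    same_niche: bool           # is the linking site relevant to my niche?
    page_monthly_traffic: int  # estimated organic visits to the linking page

def worth_pursuing(p: LinkProspect, min_rating: int = 20, min_traffic: int = 1) -> bool:
    """Apply the three checks: authority, relevance, and linking-page traffic."""
    has_authority = p.domain_rating >= min_rating
    has_traffic = p.page_monthly_traffic >= min_traffic
    return has_authority and p.same_niche and has_traffic

# Hypothetical prospects: a big general news site vs. a small niche blog.
prospects = [
    LinkProspect("bignews.example", 80, same_niche=False, page_monthly_traffic=0),
    LinkProspect("nicheblog.example", 30, same_niche=True, page_monthly_traffic=450),
]

for p in prospects:
    print(p.domain, "->", "pursue" if worth_pursuing(p) else "skip")
```

In practice the thresholds matter less than applying all three checks together instead of sorting prospects by authority score alone.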

Core Web Vitals have become a confirmed ranking factor, but not all metrics matter equally. I spent way too much time trying to optimize all three metrics perfectly.

After testing and research, I realized LCP or Largest Contentful Paint has the biggest impact on user behavior. When your main content takes more than 2.5 seconds to appear, bounce rates can increase by 30 to 40 percent according to my tracking across multiple client sites.

That increase in bounce rate hurts your rankings indirectly through user experience signals. I’ve seen rankings improve just by fixing LCP even when the other Core Web Vitals metrics weren’t perfect.

Advanced Factors (Competitive Edge for Tough Keywords)

These factors become important when you’re competing for difficult keywords or trying to break into the top 3 positions. They’re not necessary for every site, but they’re what separates good rankings from great rankings.

Topical authority through content clusters is one of the most powerful advanced strategies. Instead of writing random articles about whatever, you build comprehensive coverage around a core topic.

I tested this with a site in the finance niche. Instead of writing scattered articles about different finance topics, I created a cluster around retirement planning. One pillar page covered retirement planning comprehensively, then 15 supporting articles covered specific aspects.

Those articles all linked to each other through strategic internal linking. Google started recognizing the site as an authority on retirement planning specifically. The entire cluster started ranking better, not just individual articles.

Brand signals across multiple platforms have become surprisingly important. I noticed this when analyzing why some sites with modest backlink profiles were outranking sites with much stronger link profiles.

The difference was brand presence. The higher ranking sites were mentioned on social media platforms, had reviews on multiple directories, appeared in industry forums, and had active engagement across channels.

I started building this for my own site. Not because social signals directly affect rankings, which is debatable, but because Google seems to use brand mentions and recognition as a trust signal.

E-E-A-T optimization is critical for certain niches, especially anything Google considers YMYL or Your Money Your Life content. This includes health, finance, legal, and similar topics.

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness. I’ll dive deeper into this later, but the basic idea is demonstrating that you’re qualified to write about your topic.

Schema markup and structured data help Google understand your content better and can lead to rich results in SERP features. I implemented schema on a recipe site and saw featured snippets appear within weeks.

The traffic boost from those featured snippets was significant. Not every site needs extensive schema, but for certain content types like recipes, reviews, events, and products, it makes a real difference.
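For a sense of what structured data looks like, here is a minimal sketch that builds a schema.org `Recipe` object as JSON-LD and wraps it in the script tag that goes into the page. The recipe itself is made up, and Google's documentation lists further required and recommended properties (such as an image) before a page is eligible for rich results.

```python
import json

def recipe_jsonld(name: str, author: str, ingredients: list[str], steps: list[str]) -> str:
    """Build a JSON-LD script tag describing a recipe with schema.org vocabulary."""
    data = {
        "@context": "https://schema.org",
        "@type": "Recipe",
        "name": name,
        "author": {"@type": "Person", "name": author},
        "recipeIngredient": ingredients,
        "recipeInstructions": [{"@type": "HowToStep", "text": step} for step in steps],
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'

# Hypothetical recipe used only for illustration.
snippet = recipe_jsonld(
    name="Weeknight Tomato Soup",
    author="Jane Example",
    ingredients=["800 g canned tomatoes", "1 onion", "2 cups vegetable stock"],
    steps=["Soften the onion.", "Add tomatoes and stock.", "Simmer, then blend."],
)
print(snippet)
```

Validating the output with a structured data testing tool before publishing catches missing properties early.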

On-Page SEO Ranking Factors: Content & Optimization

On-page SEO is where you have the most direct control. These are elements on your actual web pages that influence how Google understands and ranks your content.

I’ve seen people overthink on-page SEO to the point of paralysis. They obsess over keyword density percentages and exact keyword optimization formulas. That’s not how modern SEO works.

Content Quality and Information Gain

Content quality used to mean hitting a certain word count and including your keyword enough times. That definition is outdated and will get you nowhere in 2026.

Quality now means providing unique value that readers can’t get elsewhere. I call this information gain, and it’s become the most critical content factor.

When I write an article now, I ask myself one question: if someone read the top 10 results on this topic and then read my article, what new information would they learn?

If the answer is nothing, I haven’t created information gain. I’m just adding to the noise.
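That question can be turned into a crude checklist. The sketch below assumes you have hand-listed the subtopics each competing page covers, and it simply reports which of your subtopics appear in none of them. It says nothing about how Google measures information gain; it is only a way to make the question concrete.

```python
def new_topics(my_topics: set[str], competitor_topics: list[set[str]]) -> set[str]:
    """Return the subtopics in my article that no competing page covers."""
    already_covered = set().union(*competitor_topics)
    return my_topics - already_covered

# Hypothetical, hand-labeled subtopics.
mine = {"pricing", "setup", "original survey data"}
competitors = [
    {"pricing", "setup"},
    {"setup", "alternatives"},
]

print(new_topics(mine, competitors))
```

An empty result means the draft is a summary of what already ranks.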

I tested this principle recently. I wrote two articles on similar topics. The first one I researched by reading competitor articles and then writing my own version covering the same points. The second one I wrote based on an actual test I ran, including specific results and screenshots.

The first article struggled to break into the top 20. The second one ranked in position 6 within a month and has since climbed to position 3.

The difference was information gain. The second article contained unique data that existed nowhere else. Google had a reason to rank it because it offered something new.

You can create information gain through original research, case studies, first hand testing, unique perspectives based on experience, or by combining information from multiple sources in a new way.

One technique I use is the expert interview. I reach out to people with real experience in the topic and include their insights. Those quotes and perspectives don’t exist in competitor articles, which gives me an information advantage.

Matching Search Intent (The 3 C’s Framework)

Understanding search intent separates mediocre content from content that actually ranks.

I learned this framework from analyzing thousands of search results, and it’s changed how I approach content creation completely. I look at the 3 Cs: content type, content format, and content angle.

Content type is the kind of page itself. Is Google rewarding blog posts, product pages, category pages, landing pages, or videos?

I made this mistake early on. I was trying to rank a product page for a keyword where all the top results were educational blog posts. I couldn’t figure out why my perfectly optimized product page was stuck on page 3.

The problem was content type mismatch. Searchers typing that keyword wanted to learn, not buy. Once I created an educational blog post targeting that keyword and linked to the product page from within the content, both pages performed better.

Content format is the structure and style of the content. Are top results how to guides, listicles, comparison posts, opinion pieces, or news articles?

For example, if I search “best budget laptops” the top results are almost all listicles. If I search “how to choose a laptop” the top results are step by step guides.

Match the format that Google is already showing for your target keyword. Fighting against the established SERP pattern rarely works.

Content angle is the unique hook or selling point. What makes the top ranking content appealing?

I look for patterns in titles and content. Are they emphasizing “fast,” “easy,” “cheap,” “comprehensive,” “for beginners,” or some other angle?

For a keyword like “SEO tools,” I might see top results angled toward “free SEO tools” or “best SEO tools for small businesses.” That tells me the searcher is probably cost conscious or looking for beginner friendly options.

My content angle should align with those insights rather than trying to rank an article about “enterprise SEO platforms” for that same keyword.

I use this 3 Cs analysis before writing any content now. It takes 10 minutes and has dramatically improved my ranking success rate.
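The tallying part of that analysis is easy to script once the top results are labeled by hand. This sketch assumes a list of dictionaries with hypothetical `type`, `format`, and `angle` labels and reports the most common value for each.

```python
from collections import Counter

def dominant_pattern(results: list[dict[str, str]]) -> dict[str, str]:
    """Return the most common content type, format, and angle among the results."""
    pattern = {}
    for key in ("type", "format", "angle"):
        counts = Counter(result[key] for result in results)
        pattern[key] = counts.most_common(1)[0][0]
    return pattern

# Hypothetical labels for the top results of one query.
top_results = [
    {"type": "blog post", "format": "comparison", "angle": "budget"},
    {"type": "blog post", "format": "comparison", "angle": "budget"},
    {"type": "blog post", "format": "listicle", "angle": "beginner"},
    {"type": "product page", "format": "review", "angle": "budget"},
]

print(dominant_pattern(top_results))
```

The labeling still takes judgment. The script only keeps the count honest.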

Keyword Placement That Doesn’t Feel Forced

Keywords still matter, but the way we use them has evolved significantly.

I remember when SEO meant calculating exact keyword density and making sure you hit 2 to 3 percent density. That approach feels robotic now and doesn’t reflect how Google’s natural language processing works.

Modern keyword optimization is about semantic relevance. Google understands synonyms, related concepts, and topic relationships.

When I write now, I include my primary keyword naturally in a few key places: the title, the meta description, at least one H2 heading, the first paragraph, and the conclusion. Beyond that, I focus on covering the topic comprehensively using natural language.

I use variations of my keyword, related terms, and semantic keywords that Google associates with the topic. This creates topical relevance without keyword stuffing.

For example, if my target keyword is “content marketing strategy,” I’ll naturally use related terms like “content planning,” “editorial calendar,” “audience targeting,” “content distribution,” and “content performance metrics” throughout the article.

Google understands these terms are semantically related to my main topic. Using them strengthens my content’s relevance signal.
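A quick placement check along these lines can be scripted. The sketch below assumes the page has already been split into a title, meta description, H2 headings, and paragraphs, and it only does exact, case-insensitive matching, so it will miss natural variations of the keyword.

```python
def keyword_placement(
    keyword: str,
    title: str,
    meta_description: str,
    h2_headings: list[str],
    paragraphs: list[str],
) -> dict[str, bool]:
    """Report whether the keyword appears in each of the key places."""
    kw = keyword.lower()
    return {
        "title": kw in title.lower(),
        "meta_description": kw in meta_description.lower(),
        "h2": any(kw in heading.lower() for heading in h2_headings),
        "first_paragraph": bool(paragraphs) and kw in paragraphs[0].lower(),
        "conclusion": bool(paragraphs) and kw in paragraphs[-1].lower(),
    }

# Hypothetical article content.
report = keyword_placement(
    "content marketing strategy",
    title="Content Marketing Strategy: A Practical Guide",
    meta_description="Learn how to build a content marketing strategy that fits a small team.",
    h2_headings=["Why planning matters", "Building your content marketing strategy"],
    paragraphs=[
        "A content marketing strategy starts with your audience.",
        "Distribution matters too.",
        "Review your plan every quarter.",
    ],
)

print([place for place, found in report.items() if not found])
```

A missing placement is a prompt to reread that part of the page, not a reason to force the phrase in.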

One technique I find helpful is reading my content out loud. If a keyword inclusion sounds awkward or forced when spoken, it probably is. Rewrite it to sound natural.

The goal is content that serves readers first and happens to be optimized for search engines as a natural byproduct of being comprehensive and well structured. If you’re using WordPress, the platform offers specific tools and methods for adding keywords in WordPress that align with these modern optimization principles.

Title Tags, Meta Descriptions, and Header Structure

These on-page elements directly influence both rankings and click through rate from search results.

Title tags are one of the strongest on-page signals. I always include my primary keyword in the title tag, preferably toward the beginning.

But I’ve learned that SEO optimized doesn’t mean boring. The title needs to make people want to click when they see it in search results.

I use numbers, years, power words, or clear benefits when appropriate. Instead of “SEO Ranking Factors” I might write “47 SEO Ranking Factors That Actually Matter in 2026.”

The number adds specificity. “Actually Matter” addresses the searcher’s real concern. The year signals freshness.

Keep title tags under 60 characters so they don’t get cut off in search results. I’ve had perfectly good titles that got truncated at the worst possible spot, completely changing the meaning.

Meta descriptions don’t directly affect rankings, but they heavily influence click through rate, which does impact rankings indirectly.

I write meta descriptions that include my primary keyword naturally and clearly explain what value the page provides. I treat it like ad copy. What would make someone choose my result over the nine others on the page?

I write descriptions between 120 and 155 characters. Too short and you’re wasting space. Too long and Google cuts it off. For WordPress users, SEO plugins like Yoast or All in One SEO make it easy to manage title tags and meta descriptions directly from your post editor, often providing character count feedback in real-time.
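The length guidelines above are simple to check in bulk. This sketch uses the character limits from this section as constants. Keep in mind that Google truncates by pixel width rather than by character count, so the numbers are a rule of thumb.

```python
TITLE_MAX = 60                  # keep titles under this many characters
META_MIN, META_MAX = 120, 155   # target range for meta descriptions

def check_snippet(title: str, meta_description: str) -> list[str]:
    """Return a list of length problems for a title and meta description."""
    problems = []
    if len(title) >= TITLE_MAX:
        problems.append(f"title is {len(title)} characters; keep it under {TITLE_MAX}")
    if len(meta_description) < META_MIN:
        problems.append(f"meta description is {len(meta_description)} characters; aim for at least {META_MIN}")
    elif len(meta_description) > META_MAX:
        problems.append(f"meta description is {len(meta_description)} characters; keep it to {META_MAX} or fewer")
    return problems

title = "47 SEO Ranking Factors That Actually Matter in 2026"
meta = "Too short to be useful."

for problem in check_snippet(title, meta):
    print(problem)
```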

Header tags help both users and search engines understand your content organization.

I use one H1 tag that typically matches or closely resembles my title. Then I use H2 tags for main sections and H3 tags for subsections under those H2s.

I include keywords in some header tags where natural, but I don’t force it. Headers should accurately describe what each section covers.

Proper header hierarchy also helps with accessibility and user experience. People often scan articles by reading headers first. Clear, descriptive headers improve the reading experience.
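To audit heading structure on an existing page, something like the following works as a starting point. It uses Python's built-in `html.parser` to collect heading levels, then flags pages without exactly one H1 and places where a level is skipped. The sample HTML is made up.

```python
from html.parser import HTMLParser

class HeadingCollector(HTMLParser):
    """Collect heading levels (1 to 6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))

def heading_problems(html: str) -> list[str]:
    """Flag a missing or repeated h1 and any skipped heading levels."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels
    problems = []
    h1_count = levels.count(1)
    if h1_count != 1:
        problems.append(f"expected exactly one h1, found {h1_count}")
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            problems.append(f"h{previous} is followed by h{current}, skipping a level")
    return problems

page = "<h1>SEO Ranking Factors</h1><h3>Title Tags</h3><h2>Technical SEO</h2>"
print(heading_problems(page))
```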

Internal Linking and Site Structure

Internal linking distributes authority throughout your site and helps Google understand how your pages relate to each other.

I used to ignore internal linking. Big mistake. It’s one of the easiest ways to boost rankings for important pages.

My approach now is to link from new content to older related content and periodically update older content to link to newer articles. This creates a web of topical relevance through strategic internal linking.

I’m strategic about anchor text. Instead of always using generic “click here” links, I use descriptive anchor text that includes relevant keywords when natural.

For example, if I’m linking to an article about backlinks, I might use anchor text like “building quality backlinks” or “effective link building strategies.”

But I vary it. Using the exact same keyword anchor text for every internal link looks manipulative.

Site architecture matters too. I follow what some SEO practitioners call the 3 click rule. Every important page on your site should be reachable within three clicks from your homepage.

This helps with crawl budget efficiency. Google’s bots can discover and index your content faster when it’s not buried deep in your site architecture.

I organize content into logical categories and use breadcrumb navigation to make the hierarchy clear to both users and search engines. For WordPress sites with extensive content libraries, AI-powered internal linking tools can help identify linking opportunities you might have missed manually, though the strategic principles I’ve outlined should always guide your decisions.

Image Optimization and Alt Text

Images improve user experience and can drive traffic from image search, but they need proper optimization.

I always compress images before uploading them. Large image files slow down page load speed, which hurts both user experience and Core Web Vitals metrics.

There are plenty of free tools that compress images without noticeable quality loss. I use them for every image.

Alt text serves two purposes. It helps visually impaired users understand images through screen readers, and it helps search engines understand image content.

I write descriptive alt text that explains what the image shows. If it’s relevant, I include keywords naturally, but I never keyword stuff alt text.

For example, instead of alt text like “image123.jpg” or just “SEO,” I might write “screenshot showing Google search results for SEO ranking factors.”

That’s descriptive, helpful for accessibility, and provides context that search engines can understand.
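Finding images with missing alt text across a page can be automated. The sketch below uses Python's built-in `html.parser` and lists the `src` of every image whose alt attribute is absent or blank. Purely decorative images can legitimately carry an empty alt, so the output is a review list, not an error list.

```python
from html.parser import HTMLParser

class AltTextAuditor(HTMLParser):
    """Record the src of every <img> with a missing or blank alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = (attributes.get("alt") or "").strip()
        if not alt:
            self.missing.append(attributes.get("src") or "(no src)")

def images_missing_alt(html: str) -> list[str]:
    auditor = AltTextAuditor()
    auditor.feed(html)
    return auditor.missing

# Hypothetical page fragment.
page = (
    '<img src="serp.jpg" alt="screenshot showing Google search results for SEO ranking factors">'
    '<img src="IMG_5847.jpg">'
    '<img src="chart.png" alt="  " />'
)
print(images_missing_alt(page))
```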

I also use descriptive file names before uploading images. Instead of “IMG_5847.jpg” I rename it to something like “content-quality-ranking-factor.jpg.”

These are small optimizations, but they add up across hundreds of images on a site.

Technical SEO Ranking Factors: Foundation First

Technical SEO used to intimidate me. It sounded complicated and required skills I didn’t have. But I’ve learned that most technical SEO is actually straightforward once you understand the core concepts.

The goal of technical SEO is simple: make it easy for search engines to find, crawl, understand, and index your content.

Crawling, Indexing, and the “Discovered Not Indexed” Problem

Before Google can rank your pages, it needs to find them, access them, and add them to its index. This is where crawling and indexing come in.

Crawling is when Google’s bots visit your pages and read the content. Indexing is when Google stores that content in its database so it can be retrieved for relevant searches.

I thought these processes were automatic and always worked perfectly. They’re not.

I’ve encountered the “discovered – currently not indexed” status in Google Search Console more times than I can count. This means Google knows the URL exists but hasn’t crawled it yet, so the page can’t be indexed.

For months I thought this was a technical issue. I’d check my robots.txt file, my XML sitemap, my server settings. Everything would be fine, but the pages still wouldn’t index.

Then I learned that in most cases, this isn’t a technical problem at all. It’s a content quality problem.

Google has a limited crawl budget, which is basically the number of pages it’s willing to crawl and index from your site. If Google thinks your content is low quality, thin, or duplicative, it won’t spend crawl budget on it.

I tested this by improving the quality and uniqueness of pages that weren’t indexing. I added more depth, included unique information, and made sure they provided real value.

Within weeks, many of those pages moved from “discovered not indexed” to indexed and ranking.

The lesson is that content quality affects even the technical foundation of SEO. You can’t trick Google into indexing worthless pages with technical tweaks.

That said, real technical issues do happen. Make sure your robots.txt file isn’t accidentally blocking important pages. Submit an XML sitemap in Google Search Console. Monitor the coverage report for crawl errors.
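Most CMS platforms and SEO plugins generate a sitemap for you, but the format itself is small. As an illustration, this sketch builds a minimal sitemap following the sitemaps.org protocol with Python's standard library. The `example.com` URLs are placeholders, and optional fields such as `lastmod` are left out.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(urls: list[str]) -> str:
    """Return a minimal XML sitemap listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url  # special characters are escaped on output
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

sitemap = build_sitemap([
    "https://example.com/",
    "https://example.com/blog/seo-ranking-factors",
])
print(sitemap)
```

Saved as `sitemap.xml` at the site root, a file like this can then be submitted in Google Search Console.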

But if you’re facing indexation issues, look at content quality first. That’s usually the real culprit.

Site Architecture and the 3-Click Rule

How you structure your website affects how efficiently Google can crawl it and how authority flows through your pages.

I follow what’s called the 3-click rule: every important page should be accessible within three clicks from your homepage.

This creates a shallow site structure where Google’s crawlers can discover new content quickly and where link equity from your homepage distributes more evenly.

I used to organize sites with deep hierarchies. Homepage to category to subcategory to sub-subcategory to finally the actual content. That’s too deep.

Now I keep it flatter. Homepage to category to content, with plenty of internal links connecting related content horizontally.

Breadcrumb navigation helps too. It shows users and search engines exactly where they are in your site hierarchy.

I also make sure my most important pages are linked from my homepage or from high authority pages that are close to the homepage. This signals to Google which pages I consider most valuable.
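To make the 3-click rule concrete, here is a small sketch that computes click depth with a breadth-first search over an internal link graph. The `SITE` dictionary is a hypothetical example; on a real site you would build it from a crawl export.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the homepage; depth is the minimum number of clicks."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
SITE = {
    "/": ["/guides/", "/tools/"],
    "/guides/": ["/guides/technical-seo/"],
    "/guides/technical-seo/": ["/guides/technical-seo/crawling/"],
    "/guides/technical-seo/crawling/": ["/guides/technical-seo/crawling/robots-txt/"],
    "/tools/": [],
}

depths = click_depths(SITE, "/")
too_deep = sorted(page for page, depth in depths.items() if depth > 3)
print("Pages deeper than three clicks:", too_deep)
```

Pages that never show up in `depths` are orphans with no internal path from the homepage, which is usually a bigger problem than being four clicks deep.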

Core Web Vitals: Which Metrics Matter Most

Core Web Vitals are Google’s attempt to measure page experience through three specific metrics: LCP, INP, and CLS.

I used to try optimizing all three equally. Then I realized that’s not the most efficient approach.

LCP or Largest Contentful Paint measures how long it takes for your main content to load. This is the metric I focus on first because it has the biggest impact on user behavior.

If your main content takes more than 2.5 seconds to appear, users get impatient. Bounce rates increase significantly. I’ve tracked this across multiple sites and consistently seen bounce rates jump 30 to 40 percent when LCP is slow.

That increased bounce rate hurts rankings indirectly. Google sees users clicking back to search results quickly, which signals that your page didn’t satisfy their needs.

Improving LCP usually involves optimizing images, reducing server response time, and eliminating render-blocking resources. These are technical fixes but well worth the effort.

INP or Interaction to Next Paint measures how quickly your page responds visually when users interact with it. It replaced FID (First Input Delay) as a Core Web Vital in March 2024. This matters less for content sites where users are mostly reading, but it’s critical for sites with forms, menus, or interactive elements.

CLS or Cumulative Layout Shift measures visual stability. Have you ever tried to click a button on a page and suddenly an ad loads that shifts everything down so you click the wrong thing? That’s layout shift and it’s incredibly frustrating.

I fix CLS by setting specific dimensions for images and ad spaces so elements don’t jump around as the page loads.

All three metrics matter, but if you’re prioritizing optimization efforts, start with LCP. It drives the biggest improvement in user experience and indirectly benefits rankings the most.
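If you want to triage field data quickly, a sketch like this one rates each metric against Google's published thresholds. It uses INP, the responsiveness metric that replaced FID in March 2024. The sample measurements are invented for illustration.

```python
# Google's published boundaries: (upper limit for "good", upper limit for "needs improvement").
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout shift score
}


def rate(metric: str, value: float) -> str:
    """Classify a single Core Web Vitals measurement."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


# Hypothetical 75th-percentile field values for one page.
page_metrics = {"LCP": 3.1, "INP": 180, "CLS": 0.02}

for metric, value in page_metrics.items():
    print(f"{metric}: {value} -> {rate(metric, value)}")
```

With these sample numbers only LCP falls outside the "good" range, which matches the order I'd work in anyway.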

Mobile Optimization (Still Critical in 2026)

Google uses mobile-first indexing, which means it primarily crawls and evaluates the mobile version of your site, even when determining desktop rankings.

This caught me off guard initially. I had a site that looked beautiful on desktop but was barely usable on mobile. Rankings suffered across the board, even for desktop searches.

The mobile version had tiny text that required zooming. Buttons were too close together making them hard to tap accurately. Images didn’t resize properly forcing horizontal scrolling.

I thought the desktop experience would carry the site. It didn’t. The poor mobile experience tanked everything.

Mobile optimization isn’t optional anymore. I now design mobile first and scale up to desktop rather than the other way around.

Key things I check: text is readable without zooming, tap targets are properly spaced, content fits the screen width, site speed is fast on mobile connections.
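One check that is easy to automate is whether a page declares a responsive viewport at all, since without it mobile browsers fall back to a zoomed-out desktop layout. The sketch below uses Python's built-in `html.parser`. It is only a rough proxy I'm assuming here for "content fits the screen width" and says nothing about tap targets or font sizes.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Records the content of the first-seen or last-seen <meta name="viewport"> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.viewport: str | None = None

    def handle_starttag(self, tag, attrs):
        if tag == "meta":
            attributes = dict(attrs)
            if (attributes.get("name") or "").lower() == "viewport":
                self.viewport = attributes.get("content") or ""


def has_responsive_viewport(html: str) -> bool:
    """True if the page sets width=device-width in its viewport meta tag."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.viewport is None:
        return False
    return "width=device-width" in finder.viewport.replace(" ", "").lower()


# Hypothetical page head for illustration.
sample = '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
print(has_responsive_viewport(sample))
```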

Google retired the Mobile Usability report in Search Console in late 2023, so I now run Lighthouse audits in Chrome DevTools to flag specific issues. I check regularly and fix problems immediately.

Is HTTPS Really a Ranking Factor?

Yes, HTTPS is a confirmed ranking factor. Google announced it in 2014 and described it as a lightweight signal.

That means HTTPS alone won’t make you rank number 1, but not having it can hurt you, especially in competitive niches.

Beyond rankings, HTTPS affects user trust significantly. Browsers now display warnings on non HTTPS sites. I’ve seen bounce rates spike when visitors get security warnings before even seeing content.

Getting an SSL certificate and switching to HTTPS is straightforward and often free through hosting providers.

I moved all my sites to HTTPS years ago. It’s basic security hygiene at this point, and the ranking boost, while minor, costs nothing to implement.

Schema Markup and Structured Data

Schema markup is code you add to your pages that helps search engines understand your content more precisely.

I was intimidated by schema initially because it involves adding structured data in JSON-LD format. But there are tools that generate the code for you.

The benefit is better visibility in search results. Schema can trigger rich results like star ratings, FAQ boxes, recipe cards, event listings, and more.

I implemented FAQ schema on several articles and started appearing in the “People Also Ask” boxes. The traffic increase from those placements was substantial.

Schema doesn’t guarantee rich results, but it makes you eligible for them. Without schema, you’re not even in the running.

I focus on schema types most relevant to my content: Article schema for blog posts, FAQ schema for question and answer sections, Organization schema for brand information, and Local Business schema for location based content.

You don’t need schema on every page, but using it strategically on key content can provide a competitive edge.
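For anyone put off by JSON-LD, here is a minimal sketch that builds FAQPage markup from question and answer pairs using only Python's `json` module. The `FAQPage`, `Question`, and `Answer` types come from schema.org. The function name and the sample questions are my own placeholders.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # Escape "</" so answer text can never close the surrounding <script> tag early.
    return json.dumps(data, indent=2).replace("</", "<\\/")


# Placeholder questions and answers for illustration.
markup = faq_jsonld([
    ("Is HTTPS a ranking factor?", "Yes, but it is a lightweight signal."),
    ("Do I need schema on every page?", "No, use it on key content."),
])

print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed `<script>` block goes in the page's HTML. The questions in the markup should match the questions that are visible on the page.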

Off-Page SEO Ranking Factors: Beyond Backlinks

Off-page SEO is everything that happens outside your website that affects your rankings. Most people think this just means backlinks, but the picture is bigger than that in 2026.

Building a Quality Backlink Profile (Not Just Quantity)

Backlinks remain one of the strongest ranking signals. They’re essentially votes of confidence from other sites.

But not all backlinks are created equal. I learned this the hard way when I built hundreds of low quality links and saw zero ranking improvement.

Quality over quantity became my mantra. I’d rather have 10 backlinks from relevant, authoritative sites than 100 backlinks from random directories and low quality blogs.

I evaluate potential backlinks using three main criteria now.

First, the overall authority of the linking site. This is still important. A link from a well established site in my industry carries more weight than a link from a brand new blog.

Second, topical relevance. A link from a site in my niche or industry is worth more than a link from a completely unrelated site, even if that unrelated site has higher authority.

I’ve seen pages with backlinks from DR 30 sites in the same niche outrank pages with DR 80 links from general news sites. Relevance matters more than raw authority in 2026.

Third, whether the linking page itself actually gets organic traffic and ranks for keywords. This is something I only recently started checking, and it’s been eye opening.

I now run what I call the traffic test before pursuing any link opportunity. I check if the page that would link to me actually ranks for anything and receives traffic.

If that page has zero traffic and ranks for no keywords, why would a link from it help me? Google clearly doesn’t value that page enough to send it traffic.

I once turned down a guest post opportunity on a DR 75 site because the specific category where my post would appear got zero traffic. The high domain authority was meaningless if the linking page itself had no value in Google’s eyes.

On the flip side, I’ve actively pursued links from DR 25 sites when the linking page was highly relevant and ranking well for keywords in my niche.

Modern Link Building: The Traffic Test

Let me explain the traffic test in more detail because it’s changed how I approach link building entirely.

When I identify a potential backlink opportunity, whether through outreach, guest posting, or any other method, I don’t just look at domain metrics anymore.

I check the specific page that would link to me using tools that show estimated organic traffic. I want to see that the page gets visitors from search engines.

I also check what keywords that page ranks for. If it’s ranking for dozens of relevant keywords, that’s a good sign. If it ranks for nothing or only branded terms, that’s a red flag.

This approach has improved my link building ROI dramatically. I waste less time pursuing links that look good on paper but provide little actual value.

I also focus more on relevance than authority. A link from a smaller site that’s highly relevant to my niche and gets traffic is worth more than a link from a massive site that’s completely unrelated.
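The traffic test is simple enough to sketch in a few lines. Everything here is illustrative: the `LinkProspect` fields, the thresholds, and the two sample prospects are assumptions, and the numbers would come from whichever SEO tool you use for traffic and keyword estimates.

```python
from dataclasses import dataclass


@dataclass
class LinkProspect:
    url: str
    domain_rating: int      # third-party authority score such as DR
    page_traffic: int       # estimated monthly organic visits to the linking page
    relevant_keywords: int  # non-branded, on-topic keywords the page ranks for
    same_niche: bool


def passes_traffic_test(p: LinkProspect, min_traffic: int = 1, min_keywords: int = 1) -> bool:
    """The linking page itself must get search traffic and rank for something relevant."""
    return p.page_traffic >= min_traffic and p.relevant_keywords >= min_keywords


def shortlist(prospects: list[LinkProspect]) -> list[LinkProspect]:
    """Keep prospects that pass, ordered by relevance first and page traffic second."""
    passing = [p for p in prospects if passes_traffic_test(p)]
    # Domain rating is deliberately not part of the sort key.
    return sorted(passing, key=lambda p: (p.same_niche, p.page_traffic), reverse=True)


# Hypothetical prospects mirroring the two cases described above.
prospects = [
    LinkProspect("https://bignews.example/guest-posts/seo", 75, 0, 0, False),
    LinkProspect("https://nicheblog.example/seo-guide", 25, 900, 34, True),
]

worth_pursuing = [p.url for p in shortlist(prospects)]
print(worth_pursuing)
```

The DR 75 prospect drops out because the linking page has no traffic, while the DR 25 page in the same niche makes the shortlist.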

Why Relevant DR 30 Beats Irrelevant DR 80

This insight challenged everything I thought I knew about link building.

I used to chase the highest domain authority links I could get. I’d celebrate landing a DR 80 link and assume my rankings would skyrocket.

Sometimes they did. Often they didn’t.

I started analyzing backlink profiles of pages that ranked well despite having fewer high authority links than competitors. What I found was that their links were incredibly relevant.

A DR 30 link from a respected blog in your exact niche can move rankings more than a DR 80 link from a general news site that mentions you in passing.

Why? Google has gotten much better at understanding topical relevance and discounting manipulative link patterns.

A link from a topically relevant site signals that people actually in your industry consider your content valuable. That’s a strong endorsement.

A link from a random high authority site might just mean you got mentioned in a roundup article or paid for a guest post on a site that accepts content about anything.

I tested this directly. I built a page with 15 highly relevant links from DR 20 to 40 sites. I built another page with 5 DR 70 plus links from general sites.

The page with relevant moderate authority links ranked higher and stayed more stable through algorithm updates.

Link relevance has become the quality filter that separates manipulative link building from genuine authority building.

Passive Link Magnets: Data and Tools That Earn Links

Outreach is exhausting. You send 50 emails and maybe get 3 responses. It’s time intensive and the conversion rate is low.

I’ve shifted toward creating assets that naturally attract links without outreach. I call these passive link magnets.

The most effective one I’ve used is original research and data. I conducted a survey in my industry, analyzed the results, and published the findings.

That single piece of content earned over 40 backlinks in six months without me reaching out to anyone. Other sites referenced my data when writing their own articles.

Creating original data requires effort. It took me about 40 hours total to conduct the survey, analyze results, and write the report. But those 40 hours generated more high quality links than six months of traditional outreach.

Tools and calculators also work well as link magnets. I built a simple calculator related to my niche. It wasn’t technically complex, but it provided genuine utility.

Sites started linking to it as a helpful resource for their readers. I never asked for those links. They happened naturally because the tool was useful.

Comprehensive guides and resources also attract links over time, though less reliably than data and tools.

The key is creating something that’s genuinely useful or unique enough that other content creators want to reference it.

Passive link building requires more upfront investment than outreach, but it scales better. One good data study can earn links for years.

Brand Signals Across Platforms

This is an aspect of off-page SEO that doesn’t fit the traditional backlink model, but I’ve seen it impact rankings nonetheless.

Google increasingly looks at your brand presence across multiple platforms, not just traditional SEO metrics.

I noticed this when analyzing why certain sites ranked well despite having modest backlink profiles. These sites had strong brand presence everywhere.

They were active on social media. They had reviews on multiple platforms beyond Google Business Profile. They were mentioned in forums, industry communities, and, for local businesses, on sites like Yelp and Angi (formerly Angie’s List).

I tested this with a client in the home services industry. We focused on building their brand across platforms rather than just chasing backlinks.

We encouraged happy customers to leave reviews on Yelp, Angi, Facebook, and industry-specific review sites. We built social media presence on platforms their customers actually used. We engaged in relevant online communities.

Their Google rankings improved even though we hadn’t built any traditional backlinks during that period.

My theory is that Google uses brand signals as a trust and legitimacy indicator. A business that exists across multiple platforms, has real customer reviews, and generates genuine engagement is probably legitimate.

A site that only exists on its own domain with no presence anywhere else might be a thin affiliate site or low quality operation.

This doesn’t mean social signals directly affect rankings in the traditional sense. But brand recognition and multi-platform presence seem to matter more in 2026 than they did a few years ago.

I now consider brand building a core part of SEO strategy, not a separate marketing activity.

The Backlink Myth and Other Ranking Factor Misconceptions

I’ve fallen for plenty of SEO myths over the years. Some I believed for way too long before testing them and discovering they were either outdated or simply wrong.

Let me address the biggest misconceptions I encounter regularly.

Myth #1: Backlinks Are the Only Thing That Matters

This is probably the most persistent myth in SEO. You still hear people say “just build more links” as if that’s the entire strategy.

I used to believe this. I spent months building backlinks while ignoring everything else. My rankings barely moved.

Then I built a site where I focused on perfect on-page optimization, excellent user experience, technical SEO, and brand building. I built some backlinks, but not an enormous amount.

That site outranked competitors with three times as many backlinks.

The truth is that SEO is multifaceted. Backlinks matter significantly, but they’re not sufficient by themselves.

A site with 50 highly relevant backlinks, perfect technical foundation, outstanding content that provides information gain, strong user experience signals, and clear E-E-A-T will outrank a site with 200 random backlinks and poor everything else.

The sites I see ranking well consistently have balance. They don’t excel at just one factor. They’re solid across multiple ranking factors.

Think of it like this: backlinks are a critical ingredient, but you can’t make a cake with just flour and expect it to taste good because you used a lot of flour.

Myth #2: You Must Update Content Constantly for Freshness

I wasted so much time on this one. I’d go through my site every month updating publish dates and making minor tweaks, thinking Google would reward freshness.

Sometimes rankings improved. Often they didn’t. I couldn’t figure out the pattern.

Then I learned that freshness is query dependent. It matters tremendously for some searches and not at all for others.

For queries like “best smartphones 2026” or “current SEO trends,” freshness is critical. The search itself implies the user wants current information.

For queries like “how to tie a tie” or “what is photosynthesis,” the date is irrelevant. The information doesn’t change. Updating these articles and changing the date provides zero value.

I tested this directly. I stopped updating evergreen content just for the sake of freshness. I only updated when I had genuinely new information to add.

My rankings for evergreen content stayed stable or improved. I was no longer diluting my efforts across content that didn’t benefit from updates.

Now I analyze whether freshness matters before deciding to update content. I look at whether top ranking competitors have dates in their titles. If they do, freshness probably matters for that query.

I also consider the topic itself. Does the information change over time? Is there new research or developments? If yes, updates add value. If no, I leave it alone.
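The "dates in titles" check can be roughed out with a regular expression. This is a sketch under my own assumptions: the title lists are invented, and any cutoff for what share of dated titles means freshness matters is a judgment call, not something Google publishes.

```python
import re

# Matches a standalone four-digit year such as 1998 or 2026.
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")


def dated_title_share(titles: list[str]) -> float:
    """Fraction of top-ranking titles that contain a year."""
    if not titles:
        return 0.0
    dated = sum(1 for title in titles if YEAR.search(title))
    return dated / len(titles)


# Hypothetical top results for a freshness-sensitive query.
smartphone_titles = [
    "Best Smartphones 2026: Top Picks",
    "The 10 Best Phones to Buy in 2026",
    "Best Smartphones Ranked",
    "Best Phones of 2026",
]

# Hypothetical top results for an evergreen query.
tie_titles = ["How to Tie a Tie", "How to Tie a Tie: 4 Easy Knots"]

print(dated_title_share(smartphone_titles))
print(dated_title_share(tie_titles))
```

A high share suggests searchers expect current information for that query. A share near zero suggests the topic is evergreen and date updates add nothing.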

This shift saved me countless hours that I redirected toward creating new content and building topical authority.

Myth #3: AI Content Will Get You Penalized

This myth exploded when ChatGPT and similar tools became popular. People panicked that Google would penalize any AI generated content.

I was worried too initially. But after researching Google’s actual statements and testing AI content, I learned the truth.

Google has said it doesn’t penalize content based on how it’s created. Its guidance focuses on whether content is helpful, not on whether a person or a tool wrote it.

What Google penalizes is unhelpful content that lacks original value, regardless of whether it’s written by AI or humans.

The problem is that most AI generated content does lack originality. When you prompt ChatGPT to write an article, it synthesizes information from its training data. It creates a well written summary of existing information but adds nothing new.

That content has zero information gain. It repeats what top ranking results already say. Google has no reason to rank it.

I use AI in my content creation workflow now, but not the way most people do.

I use AI for the heavy lifting: creating outlines, researching semantic keywords, organizing information, drafting rough sections that I’ll heavily edit.

But I add the unique value myself: original insights from my experience, first hand testing results, unique data, specific examples, and personal perspective.

This human in the loop approach creates content that benefits from AI efficiency while maintaining the information gain that Google rewards.

I also use persona based prompts when I do use AI for drafting. Instead of “write an article about SEO,” I prompt “write as an SEO consultant with 10 years of experience explaining SEO to a small business owner.”

The output has a more expert tone that better satisfies E-E-A-T evaluation.

The bottom line: AI content isn’t the problem. Lack of originality is the problem. And that’s true whether AI or a human created it.

Myth #4: More Content Always Means Better Rankings

I bought into this myth hard. I thought publishing massive amounts of content would build authority and improve rankings across the board.

I published 200 articles in a year on one site. Most were decent quality but not particularly unique or deep. My rankings didn’t improve proportionally to my content output.

Meanwhile I saw competitors publishing 20 articles per year and outranking me.

The difference was topical focus and quality.

They weren’t writing about random topics just to publish content. They were building deep coverage around specific topics, creating what’s called topical authority.

Publishing scattered content about everything makes you mediocre at everything. Publishing focused content about specific topics makes you an authority in those areas.

I shifted my strategy. Instead of publishing three random articles per week, I published one comprehensive article per week, all clustered around core topics.

I built topic clusters where one pillar page covered a subject broadly and multiple supporting articles covered specific aspects in detail.
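A topic cluster is easy to audit once you write it down as data. The sketch below assumes, as my own convention, that the pillar page should link to every supporting article and every supporting article should link back. The URLs and link sets are hypothetical.

```python
def cluster_gaps(pillar: str, supporting: list[str], links: dict[str, set[str]]) -> dict[str, list[str]]:
    """Find missing internal links between a pillar page and its supporting articles."""
    pillar_links = links.get(pillar, set())
    return {
        # Supporting articles the pillar page never links to.
        "pillar_missing_link_to": [page for page in supporting if page not in pillar_links],
        # Supporting articles that never link back to the pillar.
        "no_link_back_from": [page for page in supporting if pillar not in links.get(page, set())],
    }


# Hypothetical cluster: page -> set of internal pages it links to.
PILLAR = "/seo-ranking-factors/"
SUPPORTING = ["/core-web-vitals/", "/link-building/", "/schema-markup/"]
LINKS = {
    PILLAR: {"/core-web-vitals/", "/link-building/"},
    "/core-web-vitals/": {PILLAR},
    "/link-building/": set(),
    "/schema-markup/": {PILLAR},
}

gaps = cluster_gaps(PILLAR, SUPPORTING, LINKS)
print(gaps)
```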

This focused approach improved rankings far more than my previous high volume random publishing strategy.

Quality and topical relevance beat quantity every time.

How to Rank in AI Overviews (And Why Traditional SEO Still Matters)

AI Overviews were one of the biggest changes to search results in recent years. When I first saw them appearing, I panicked a bit.

I thought traditional SEO might become obsolete if AI was just going to answer questions directly in search results.

After months of analysis and testing, I learned that my concern was misplaced.

Traditional Rankings Are the Foundation for AI Visibility

Here’s what I discovered that changed my perspective completely.

I analyzed hundreds of queries that triggered AI Overviews. I looked at which sources the AI Overview cited and where those sources ranked in traditional search results.

The pattern was crystal clear. Every single source cited in AI Overviews was also ranking in the traditional top 10 organic results.

Not most of them. All of them.

I couldn’t find a single example where AI Overviews pulled information from a source that wasn’t already ranking well organically.

This tells me that traditional SEO is actually the foundation for AI visibility. You don’t rank in AI Overviews instead of traditional results. You rank there because you rank well traditionally.

Google’s AI isn’t going out and discovering obscure sources that don’t rank. It’s synthesizing information from sources that already have high rankings and authority.

This was hugely relieving. It means I don’t need to optimize differently for AI search versus traditional search. Getting the fundamentals right serves both.

The LLM Feeder Dimension of Link Quality

One new dimension I’ve started considering for link evaluation is whether the linking site is what I call an LLM feeder.

This means: is the linking site a source that AI platforms and large language models reference?

I noticed that sites frequently cited in AI Overviews tend to be authoritative sources that AI training data likely included: major publications, government sites, educational institutions, well established industry authorities.

A backlink from an LLM feeder site might carry extra weight because it signals you’re connected to sources that AI platforms trust.

I’ll admit this is partly speculation since Google hasn’t confirmed it explicitly, but the logic makes sense based on what I’m seeing.

If AI Overviews are becoming a significant part of search results, and those overviews preferentially cite certain types of authoritative sources, then being linked from those sources could benefit you both directly through traditional PageRank and indirectly through AI citation potential.

I’ve started prioritizing link opportunities from sites I know get referenced frequently: major industry publications, educational resources, government sources when relevant.

It’s too early to say definitively that this provides a ranking advantage, but I’m seeing positive signals.

The broader point is that traditional link building principles still apply. Build links from authoritative, relevant sources that add genuine value. Those fundamentals work for both traditional SEO and positioning yourself for AI visibility.

E-E-A-T: How Google Evaluates Expertise in 2026

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness. It’s the framework from Google’s Search Quality Rater Guidelines for evaluating content quality, especially for topics that could impact someone’s health, finances, safety, or major life decisions.

E-E-A-T seemed vague and impossible to optimize for when I first heard about it. Turns out it’s pretty practical once you understand the individual pieces.

Experience: Demonstrating First-Hand Knowledge

Experience is the newest addition to the framework. Google added the extra E in December 2022 specifically to emphasize that first hand experience matters.

This means showing you’ve actually done what you’re writing about, not just researched it.

I demonstrate experience in several ways.

I include specific examples from my own work. Instead of explaining a concept theoretically, I share what happened when I tested it on my own sites.

I use screenshots and data from real projects. Showing actual results builds credibility far more than just claiming something works.

I share mistakes I’ve made and what I learned from them. This authenticity actually strengthens trust rather than weakening it.

When I write about a tool or method, I explain my actual experience using it, including both what worked and what didn’t.

This first hand perspective is something AI generated content struggles to provide. It’s a key differentiator.

For topics where I lack direct experience, I interview people who do have it and include their insights. That brings real experience into the content even if it’s not my own.

Expertise: Credentials and Knowledge Signals

Expertise is about demonstrating deep knowledge and, when relevant, formal credentials.

For some topics, credentials matter enormously. If you’re writing about medical advice, being a doctor matters. For legal advice, being a lawyer matters.

For other topics, demonstrated knowledge through comprehensive, accurate content is sufficient.

I build expertise signals several ways.

I created a detailed About page that explains my background, experience, and why I’m qualified to write about my topics. I include links to my social media profiles and professional credentials.

I use author bylines on articles with brief author bios. This associates content with a real person rather than leaving it anonymous.

I cite authoritative sources when making factual claims. This shows my information is grounded in reliable research, not just opinion.

I demonstrate depth of knowledge through comprehensive coverage. Surface level content doesn’t signal expertise. Deep dives into complex topics do.

I avoid writing about topics outside my area of knowledge. Staying in my lane maintains credibility.

Authoritativeness: Building Recognition in Your Niche

Authoritativeness is about being recognized as a go to source in your field.

Building authoritativeness takes time because you can’t just declare yourself an authority. Other people in your field have to recognize you first.

I build authoritativeness through consistent, high quality content in a focused niche. I don’t write about everything. I build deep expertise in specific topics.

I’ve created topic clusters where I’ve covered subjects more comprehensively than most competitors. This positions me as particularly knowledgeable in those areas.

External signals matter too. Backlinks from other authoritative sites in my niche signal that I’m recognized by peers.

Being mentioned or cited by industry publications, appearing on podcasts, speaking at events, all contribute to authoritativeness.

I also engage in my industry community. Participating in forums, answering questions, contributing to discussions builds recognition.

Authoritativeness compounds over time. As you consistently deliver value, recognition grows, which leads to more opportunities to demonstrate authority.

Trustworthiness: Transparency and Accuracy

Trustworthiness is the foundation everything else rests on.

I build trust through transparency. I’m honest about limitations. If I don’t know something, I say so rather than guessing.

I clearly distinguish between fact and opinion. When sharing my perspective, I frame it as such rather than presenting it as universal truth.

I cite sources for factual claims and link to them so readers can verify information themselves.

I maintain accuracy by researching thoroughly and updating content when information changes.

I’m transparent about any affiliations or potential conflicts of interest. If I’m reviewing a product I get an affiliate commission from, I disclose that.

I provide contact information and respond to questions and corrections. Being accessible builds trust.

I also ensure my site has proper security with HTTPS, clear privacy policies, and professional design. These technical elements contribute to trustworthiness too.

E-E-A-T optimization isn’t about gaming a system. It’s about genuinely being a credible, trustworthy source of information. The optimization part is just making sure those qualities are visible to both users and search engines.

User Experience Ranking Factors (The Signals Google Measures)

Google can’t directly measure whether users find your content helpful. But it can measure user behavior, and that behavior serves as a proxy for satisfaction.

User experience signals matter more for rankings now than ever before.

Click-Through Rate from Search Results

Click through rate or CTR is the percentage of people who see your result in search and actually click on it.

Google can measure this directly. If your page ranks in position 5 but gets clicked more often than the pages in positions 2 through 4, that signals your result is more appealing.

Over time, Google might promote you higher based partly on that engagement.

I optimize for CTR through compelling title tags and meta descriptions. The goal is making your result stand out and clearly communicate value.

I use specific numbers when relevant, include power words that create curiosity, and make clear what benefit someone gets from clicking.

I also test different title formats. Sometimes I’ll update a title and see CTR increase without changing anything else about the page.

Higher CTR leads to more traffic, which gives Google more user behavior data to evaluate your page quality, which can improve rankings further. It’s a positive feedback loop.
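Since Search Console only shows your own pages, the practical comparison is between your pages at similar positions. Here is a sketch that computes CTR as clicks divided by impressions and flags pages that fall well below the average of their position bucket. The rows and the 0.7 cutoff are assumptions for illustration.

```python
from collections import defaultdict


def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction; 0.0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0


def underperformers(rows: list[tuple[str, float, int, int]], ratio: float = 0.7) -> list[str]:
    """Flag pages whose CTR is below ratio x the average CTR at the same rounded position.

    Each row is (page, average_position, clicks, impressions).
    """
    totals: dict[int, list[int]] = defaultdict(lambda: [0, 0])
    for _, position, clicks, impressions in rows:
        bucket = totals[round(position)]
        bucket[0] += clicks
        bucket[1] += impressions

    flagged = []
    for page, position, clicks, impressions in rows:
        bucket_clicks, bucket_impressions = totals[round(position)]
        baseline = ctr(bucket_clicks, bucket_impressions)
        if ctr(clicks, impressions) < ratio * baseline:
            flagged.append(page)
    return flagged


# Hypothetical export rows: (page, average position, clicks, impressions).
ROWS = [
    ("/a", 5.2, 40, 1000),
    ("/b", 4.8, 90, 1000),
    ("/c", 5.1, 80, 1000),
    ("/d", 2.0, 150, 1000),
]

print(underperformers(ROWS))
```

Flagged pages are the ones where I'd test a new title tag or meta description first.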

Dwell Time and Bounce Rate

Dwell time is how long someone spends on your page before returning to search results. Bounce rate is the percentage who leave quickly without engaging.

These metrics signal satisfaction or lack thereof.

If users consistently click on your result, spend two minutes reading, and then don’t return to search, that signals your page satisfied their needs.

If they click on your result and bounce back to search results within 10 seconds, that signals your page didn’t help them.

I’ve seen this impact rankings directly. Pages with high bounce rates gradually decline in rankings even if everything else about them is optimized perfectly.

I improve dwell time and reduce bounce rate several ways.

I make sure my content immediately addresses the search intent. The opening paragraph confirms that the page will answer their question.

I use clear formatting with headers, short paragraphs, bullet points, and white space. This makes content scannable and less intimidating.

I include a table of contents for long articles so people can jump to the section most relevant to them.

I use engaging writing that keeps people reading. Even on dry topics, conversational tone and relevant examples maintain interest.

Internal links to related content can extend dwell time across your site even if they leave the initial page.

How RankBrain Uses Engagement Signals

RankBrain is Google’s machine learning system that helps interpret queries and match them to relevant results. Google hasn’t confirmed exactly how it uses behavior data, but in my experience rankings respond to engagement signals.

My working model is that the system learns which results satisfy users for specific queries based on behavior data.

If you rank in position 7 but get stronger engagement signals than results above you, RankBrain might promote you higher.

Conversely, if you rank in position 3 but users consistently bounce back to search results, RankBrain might demote you.

This makes user engagement optimization critical. You can have perfect technical SEO and solid backlinks, but if users don’t engage positively with your content, rankings will suffer.

I focus on genuine helpfulness rather than trying to game engagement metrics. The best way to improve user engagement is to actually satisfy searcher intent better than competitors.

Tricks like clickbait titles might boost initial CTR, but if the content doesn’t deliver, bounce rate skyrockets and RankBrain eventually adjusts your rankings down.

The approach that works long term is simple: create content that genuinely helps people, then present it in a way that makes the value obvious immediately.

Your Ranking Factor Action Plan (What to Prioritize Based on Your Site)

Knowing ranking factors is one thing. Knowing which ones to focus on for your specific situation is another.

The right priorities depend on your site’s age, current state, and goals.

For New Sites (Under 6 Months Old)

If your site is brand new, you need to focus on foundation first.

Start with technical basics. Make absolutely sure Google can crawl and index your content. Check your robots.txt file. Submit a sitemap in Google Search Console. Ensure your site is mobile friendly and loads reasonably fast. Conducting an SEO audit systematically walks you through each of these foundation elements so you don’t miss anything critical.
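Here’s a minimal sketch of the robots.txt check, using Python’s standard library parser. The robots.txt content and the URLs are hypothetical examples, not from a real site. Python’s parser is also simpler than Googlebot’s (for instance, it doesn’t handle wildcard patterns the same way), so treat this as a quick sanity check rather than a definitive answer.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt that accidentally blocks the whole blog.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /admin/
Disallow: /blog/
"""

# Hypothetical URLs you expect Google to be able to crawl.
SAMPLE_URLS = [
    "https://example.com/",
    "https://example.com/blog/seo-ranking-factors",
    "https://example.com/admin/login",
]

for url in blocked_urls(SAMPLE_ROBOTS, SAMPLE_URLS):
    print("Blocked:", url)
```

If a page you want indexed shows up in that output, fix the robots.txt rule before worrying about anything else.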

Don’t obsess over having the fastest site on the internet yet. Just make sure it’s not actively terrible.

For content, focus on information gain over volume. Publish less frequently but make sure every piece provides unique value.

New sites struggle with crawl budget. Google won’t waste resources crawling and indexing thin content. Each piece needs to justify its existence.

I recommend starting with a content cluster approach. Choose one core topic and build comprehensive coverage around it rather than writing scattered articles about random topics.

This establishes topical authority faster than random publishing.

For links, focus on a few high-quality, relevant backlinks rather than pursuing massive quantity. Reach out to sites in your niche with genuinely useful content they might want to link to.

Consider creating a data study or useful tool that naturally attracts links.

Don’t expect immediate results. New sites typically take 3 to 6 months to start gaining traction. Focus on building a solid foundation during this period.

For Established Sites (Looking to Improve Rankings)

If your site has been around for a while but isn’t ranking as well as you’d like, your priorities are different.

Start with a content audit. Identify pages that rank on page 2 or 3 for keywords you care about. These are your low-hanging fruit.
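To make that concrete, here’s a rough sketch of how you might pull those pages out of a rankings export. I’m assuming page 2 and 3 means average positions 11 through 30 (ten results per page), and the `QueryRow` fields and sample rows are made up for illustration. Adapt them to whatever your export actually contains.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    page: str
    query: str
    position: float   # average ranking position
    impressions: int


def striking_distance(rows, min_pos=11.0, max_pos=30.0):
    """Return rows ranking on pages 2-3, highest impressions first."""
    candidates = [r for r in rows if min_pos <= r.position <= max_pos]
    return sorted(candidates, key=lambda r: r.impressions, reverse=True)


# Hypothetical export rows.
SAMPLE_ROWS = [
    QueryRow("/a", "seo ranking factors", 4.2, 900),
    QueryRow("/b", "what is dwell time", 12.5, 300),
    QueryRow("/c", "core web vitals guide", 27.0, 1200),
    QueryRow("/d", "seo", 45.0, 5000),
]

for row in striking_distance(SAMPLE_ROWS):
    print(row.page, row.query, row.position, row.impressions)
```

Sorting by impressions puts the pages with the most upside at the top of your update list.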

Analyze why they’re not ranking higher. Is the content thin compared to top results? Does it lack information gain? Is search intent misaligned?

Update these pages with additional unique value. Add new information competitors don’t have. Include more comprehensive coverage. Better align with search intent.

I’ve gotten pages to jump from position 15 to position 4 just by adding substantial unique value.

For links, audit your existing backlink profile using the traffic test. Are your current backlinks from pages that actually get traffic and rank for keywords?

If you have a lot of low-quality links, consider disavowing them. Build new links focusing on relevance and quality.

Look at competitors ranking above you. What do their backlink profiles look like? What types of sites link to them? Can you pursue similar opportunities?

Improve technical issues that might be holding you back. Check your Core Web Vitals, especially LCP. Fix indexation issues. Optimize site architecture if important pages are buried deep. If you’re running WordPress, a WordPress-specific SEO audit helps you identify platform-specific issues that might not surface in general analysis.

Build topical authority by creating content clusters. Don’t just publish isolated articles. Build comprehensive coverage around core topics.

For Local Businesses

If you’re a local business, your priorities differ from national or global sites.

NAP consistency is critical. Make sure your business Name, Address, and Phone number are identical across every single online mention. Inconsistencies confuse Google and hurt local rankings.
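A simple way to audit this is to compare every listing against one canonical record. The sketch below is my own illustration with hypothetical business data. It ignores case, punctuation, and phone formatting, so it only flags differences that survive that cleanup (like “St” versus “Street”).

```python
import re


def normalize_text(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    no_punct = re.sub(r"[^\w\s]", "", text.lower())
    return re.sub(r"\s+", " ", no_punct).strip()


def normalize_nap(name: str, address: str, phone: str) -> dict:
    return {
        "name": normalize_text(name),
        "address": normalize_text(address),
        "phone": re.sub(r"\D", "", phone),  # keep digits only
    }


def find_nap_mismatches(canonical: tuple, listings: dict) -> dict:
    """Return {source: [fields that differ from the canonical record]}."""
    expected = normalize_nap(*canonical)
    mismatches = {}
    for source, record in listings.items():
        actual = normalize_nap(*record)
        diff = [field for field in expected if actual[field] != expected[field]]
        if diff:
            mismatches[source] = diff
    return mismatches


# Hypothetical canonical record and listings.
CANONICAL = ("Acme Plumbing", "12 Main St, Springfield", "(555) 010-1234")
LISTINGS = {
    "Yelp": ("Acme Plumbing", "12 Main Street, Springfield", "555-010-1234"),
    "Facebook": ("ACME Plumbing", "12 Main St., Springfield", "555.010.1234"),
}

print(find_nap_mismatches(CANONICAL, LISTINGS))  # {'Yelp': ['address']}
```

Anything this flags is worth correcting at the source so every mention reads the same.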

Build a presence on multiple review platforms. Don’t just focus on Google Business Profile. Get reviews on Yelp, Facebook, industry-specific directories, and anywhere your customers might look.

I tested this with a local service business. We actively built reviews across five platforms. Their local rankings improved significantly even without building traditional backlinks.

Implement local business schema markup. This helps Google understand you’re a local business and can improve visibility in local search features. WordPress users can follow my guide on local SEO for WordPress which covers schema implementation and other platform-specific optimization steps.
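If you’d rather see what the markup looks like, here’s a small sketch that builds a basic schema.org `LocalBusiness` object as JSON-LD. The business details are placeholders, and this covers only a handful of common properties. Check the schema.org and Google documentation for the properties that fit your business type.

```python
import json


def local_business_jsonld(name, street, city, region, postal_code, country, phone, url) -> str:
    """Build a minimal schema.org LocalBusiness JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
    }
    return json.dumps(data, indent=2)


# Placeholder business details, for illustration only.
markup = local_business_jsonld(
    name="Acme Plumbing",
    street="12 Main St",
    city="Springfield",
    region="IL",
    postal_code="62701",
    country="US",
    phone="+1-555-010-1234",
    url="https://example.com",
)

# Wrap the JSON-LD in the script tag that goes into the page's HTML.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Keep the name, address, and phone number in the markup identical to the ones you use everywhere else.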

Create locally relevant content. Write about local events, local issues, local customers. This signals to Google that you’re genuinely part of the local community.

Build local links from chambers of commerce, local news sites, local blogs, and community organizations.

Engage locally on social media and in community forums. This builds brand signals that Google can detect. For a complete walkthrough of improving local SEO rankings with additional strategies beyond what I’ve covered here, I’ve written a comprehensive guide specifically for local businesses.

Frequently Asked Questions About SEO Ranking Factors

Let me address the questions I get asked most often about ranking factors.

How do I optimize for AI Overviews versus traditional Google rankings?

You don’t have to choose between them. Sources cited in AI Overviews very often also rank well in the traditional organic results, so traditional SEO is effectively the foundation for AI visibility. Focus on classic ranking factors like quality content, backlinks, technical SEO, and user experience. Getting those fundamentals right positions you for both traditional rankings and AI Overview inclusion.

Are backlinks still the number one ranking factor in 2026?

Backlinks remain critically important as part of Google’s core algorithm, but they’re not sufficient by themselves anymore. I’ve built sites with moderate backlinks but excellent on-page optimization, strong technical SEO, and clear brand signals that outrank competitors with three times as many links. It’s about balance across multiple factors, not backlink obsession. Quality matters more than quantity, and relevance matters more than raw authority.

Does content length matter for SEO rankings?

Word count itself is not a ranking factor. What matters is comprehensiveness and information gain. I’ve seen 1,500-word articles with unique insights and original data outrank 5,000-word articles that just repeat what competitors already say. Focus on adding unique value rather than hitting arbitrary word count targets. Sometimes thorough coverage takes 3,000 words. Sometimes it takes 800. Let the topic and search intent determine length.

How long does it take for new content to rank on Google?

With quality content and a site architecture that keeps important pages within three clicks of your homepage, initial indexing typically happens within days to weeks. But ranking improvements for competitive keywords usually take 3 to 6 months as Google evaluates user engagement signals and builds trust in your content. New sites face longer timelines than established sites with existing authority.
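The three-click rule is easy to check if you have a map of your internal links. Here’s a sketch that uses a breadth-first search from the homepage to measure click depth. The link map is a made-up example. In practice you’d build it from a crawl of your own site.

```python
from collections import deque


def click_depths(links: dict, home: str) -> dict:
    """Breadth-first search: minimum number of clicks from home to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def pages_too_deep(links: dict, home: str, max_clicks: int = 3) -> list:
    """Pages more than max_clicks from home, plus pages not reachable at all."""
    depths = click_depths(links, home)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    too_deep = {page for page, depth in depths.items() if depth > max_clicks}
    unreachable = all_pages - set(depths)
    return sorted(too_deep | unreachable)


# Hypothetical internal link map: page -> pages it links to.
SAMPLE_LINKS = {
    "/": ["/blog"],
    "/blog": ["/blog/a"],
    "/blog/a": ["/blog/b"],
    "/blog/b": ["/blog/c"],
    "/orphan": [],
}

print(pages_too_deep(SAMPLE_LINKS, "/"))  # ['/blog/c', '/orphan']
```

Pages that show up here are candidates for a link from a hub page or from your main navigation.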

Should I update old content to improve rankings?

It depends on whether freshness matters for that specific keyword. Check if top ranking competitors have dates in their titles. If they do, freshness probably matters and updates could help. For queries like “best laptops 2026” freshness is critical. For evergreen topics like “how to change a tire” the date is irrelevant. Only update when you have genuinely new information to add, not just to change the publish date.
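If you want to run the “dates in titles” check across many keywords, a few lines of Python will do it. This is only a rough heuristic of my own: it looks for a four-digit year starting with 20 in each title, and the sample titles are invented.

```python
import re

# Matches a standalone year such as 2024 or 2026.
YEAR_PATTERN = re.compile(r"\b20\d{2}\b")


def share_of_dated_titles(titles: list[str]) -> float:
    """Fraction of titles that contain a year."""
    if not titles:
        return 0.0
    dated = sum(1 for title in titles if YEAR_PATTERN.search(title))
    return dated / len(titles)


# Hypothetical titles from the top results for one query.
SAMPLE_TITLES = [
    "Best Laptops 2026: Top Picks",
    "Best laptops for students (2026)",
    "How to Change a Tire",
    "Laptop buying guide",
]

print(share_of_dated_titles(SAMPLE_TITLES))  # 0.5
```

The higher that share is for a query, the more likely it is that freshness matters there.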

Do social signals like likes and shares directly affect Google rankings?

Social signals are not confirmed direct ranking factors. However, having active social media presence helps Google recognize your brand as a legitimate entity. Being mentioned on platforms like TikTok, YouTube, Instagram, and industry forums creates indirect benefits through brand signals, traffic generation, and increased awareness. Think of social presence as part of broader brand building that supports SEO rather than as a direct ranking lever.

Will Google penalize my site for using AI-generated content?

No. Google doesn’t penalize content based on how it’s created. They evaluate whether content is helpful and original regardless of the creation method. The issue is that raw AI outputs usually lack information gain because they synthesize existing information without adding new value. Use a human-in-the-loop approach where AI handles structure and organization while you add unique insights, first-hand testing, and original perspectives. That creates content that benefits from AI efficiency while maintaining the quality Google rewards.

Which Core Web Vital should I prioritize fixing first?

Focus on LCP (Largest Contentful Paint) first because it has the biggest impact on user behavior. When your main content takes longer than 2.5 seconds to appear, bounce rates can increase by 30 to 40 percent based on what I’ve tracked across multiple sites. That increased bounce rate hurts rankings indirectly as Google sees users clicking back to search results quickly. Fix LCP before obsessing over the other Core Web Vitals for maximum impact.
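To triage pages by LCP, you can bucket them using Google’s published thresholds: 2.5 seconds or less is good, up to 4.0 seconds needs improvement, and anything slower is poor. The page paths and timings below are hypothetical. Feed in your own field data instead.

```python
def classify_lcp(seconds: float) -> str:
    """Bucket an LCP value using Google's published thresholds."""
    if seconds <= 2.5:
        return "good"
    if seconds <= 4.0:
        return "needs improvement"
    return "poor"


def lcp_fix_order(pages: dict) -> list:
    """Return (page, lcp, rating) for pages that are not 'good', slowest first."""
    failing = [(page, lcp, classify_lcp(lcp)) for page, lcp in pages.items() if lcp > 2.5]
    return sorted(failing, key=lambda item: item[1], reverse=True)


# Hypothetical LCP measurements in seconds.
SAMPLE_LCP = {
    "/": 1.9,
    "/blog/seo-ranking-factors": 3.1,
    "/services": 5.4,
}

for page, lcp, rating in lcp_fix_order(SAMPLE_LCP):
    print(f"{page}: {lcp}s ({rating})")
```

Start with whatever lands at the top of that list, since the slowest pages are the ones most likely to be losing visitors.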

Quick Reference Table: All 47 Factors at a Glance

| # | Ranking Factor | Category | Priority |
|---|---|---|---|
| 1 | XML Sitemap Submission | Technical | Foundation |
| 2 | Robots.txt Configuration | Technical | Foundation |
| 3 | Crawlability | Technical | Foundation |
| 4 | Indexation Status | Technical | Foundation |
| 5 | HTTPS and SSL Certificate | Technical | Foundation |
| 6 | Mobile Friendliness | Technical | Foundation |
| 7 | Page Speed Baseline | Technical | Foundation |
| 8 | No Toxic Backlinks | Off-Page | Foundation |
| 9 | Canonical Tags | Technical | Foundation |
| 10 | No Broken Links | Technical | Foundation |
| 11 | Clean URL Structure | On-Page | Foundation |
| 12 | Basic On-Page Optimization | On-Page | Foundation |
| 13 | No Duplicate Content | On-Page | Foundation |
| 14 | Server Uptime | Technical | Foundation |
| 15 | Structured Site Navigation | Technical | Foundation |
| 16 | Search Intent Alignment | On-Page | Growth |
| 17 | Information Gain | On-Page | Growth |
| 18 | Content Depth | On-Page | Growth |
| 19 | Content Relevance | On-Page | Growth |
| 20 | Keyword Optimization | On-Page | Growth |
| 21 | Semantic Keywords | On-Page | Growth |
| 22 | Header Tag Structure | On-Page | Growth |
| 23 | Title Tag Optimization | On-Page | Growth |
| 24 | Meta Description | On-Page | Growth |
| 25 | Internal Linking Strategy | On-Page | Growth |
| 26 | Backlink Quality and Relevance | Off-Page | Growth |
| 27 | Referring Domain Diversity | Off-Page | Growth |
| 28 | Anchor Text Variation | Off-Page | Growth |
| 29 | LCP | Technical | Growth |
| 30 | CLS | Technical | Growth |
| 31 | INP (formerly FID) | Technical | Growth |
| 32 | Image Optimization | On-Page | Growth |
| 33 | Topical Authority | On-Page | Advanced |
| 34 | Content Cluster Structure | On-Page | Advanced |
| 35 | E-E-A-T Signals | On-Page | Advanced |
| 36 | Author Expertise and Bio | On-Page | Advanced |
| 37 | About Page Optimization | On-Page | Advanced |
| 38 | Schema Markup | Technical | Advanced |
| 39 | Brand Signals Across Platforms | Off-Page | Advanced |
| 40 | Click Through Rate | UX | Advanced |
| 41 | Dwell Time | UX | Advanced |
| 42 | Bounce Rate Management | UX | Advanced |
| 43 | RankBrain Engagement | UX | Advanced |
| 44 | Page Experience Signals | Technical | Advanced |
| 45 | Crawl Budget Optimization | Technical | Advanced |
| 46 | LLM Feeder Link Quality | Off-Page | Advanced |
| 47 | Information Architecture | Technical | Advanced |